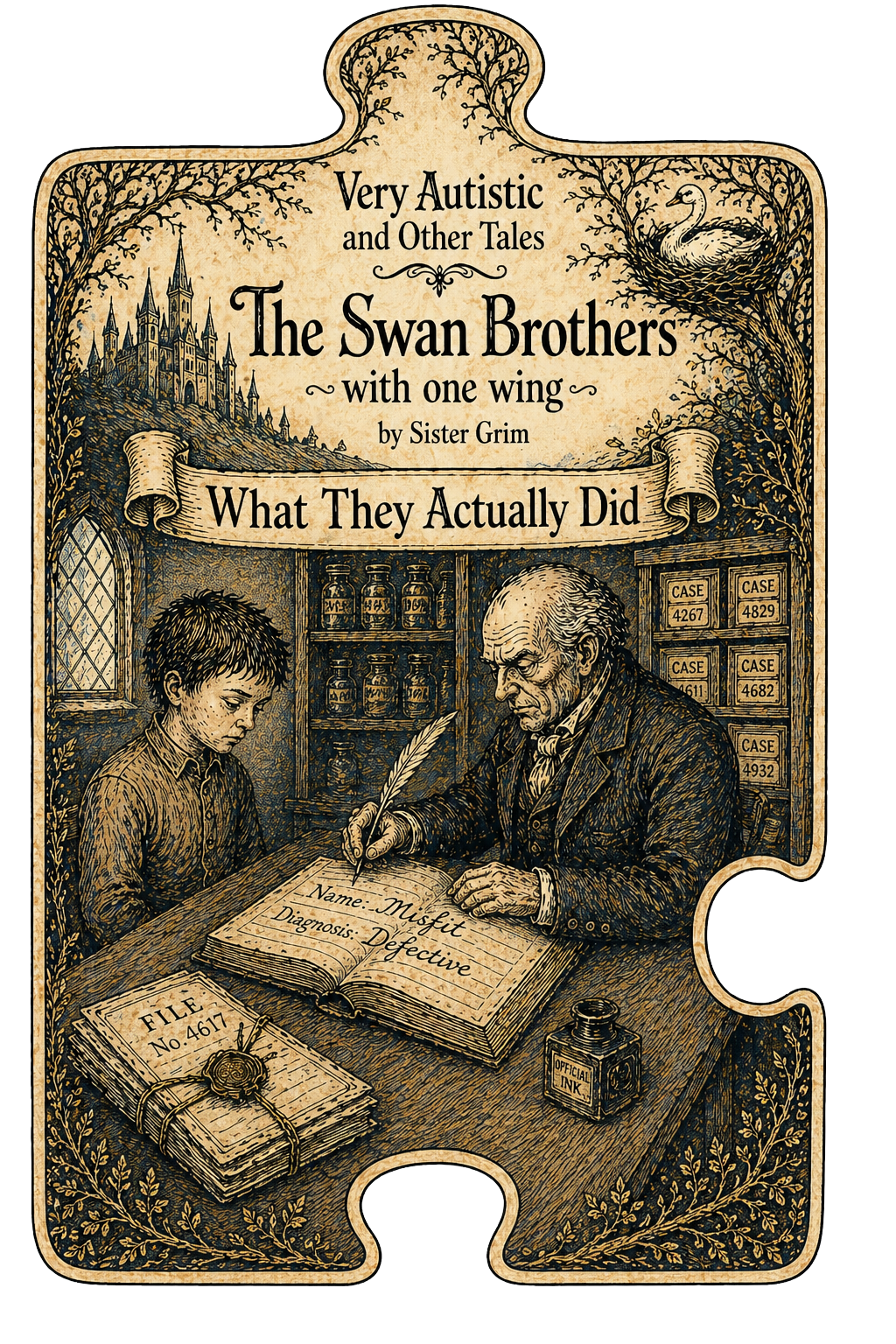

This is the plain-language version. It covers the same ground as the full technical document without the technical scaffolding — for the person who just got handed a label, for families, for advocates, for clinicians, for anyone who wants to know what this paper actually did and why it matters.

The full argument, with citations, is in The Brother With the Wing.

What Happened

In July 2025 a paper was published in one of the most prestigious science journals in the world — Nature Genetics. It came from Princeton University. It was funded by the Simons Foundation. Autism Speaks called it groundbreaking. The headline in Scientific American said: "Four New Autism Subtypes Link Genes to Children's Traits."

It said researchers had found four biologically distinct types of autism. Each with its own genetic program. Each pointing toward precision medicine and better diagnosis.

Families read it. Clinicians read it. Policy makers read it. It went everywhere.

Here is what actually happened.

What They Actually Did — Step by Step

Before any child was measured, the researchers chose the questions.

Three questionnaires. Here is what each one is, who built it, and what is wrong with it.

The SCQ: Social Communication Questionnaire. Built by Rutter, Bailey, and Lord in the 1990s. These are the same researchers who were central to defining what autism is in the diagnostic literature. They decided what autism was. Then they built a tool to measure it. The tool encodes their definition. Using it to discover what autism is amounts to using a map to prove the map is correct. The SCQ measures distance from neurotypical social behaviour. It cannot measure autistic communication on its own terms because it was never designed to. Known to systematically underidentify autistic girls. Performs poorly for Deaf children. Cannot see through masking. Validated against the ADI-R, another instrument built on the same assumptions by the same tradition, which means it was validated within a closed circle, not against independent evidence.

The RBS-R: Repetitive Behavior Scale-Revised. Built by Bodfish and colleagues. It was not designed specifically for autism. It was developed to assess repetitive behaviours in people with intellectual disability, with and without autism. That matters because it means the instrument was built around assumptions about intellectual disability populations and then applied to autism research. Every single item has a negative valence. There is no neutral item. No positive one. The scale cannot ask why a behaviour is present. It records that it is there and how much. It is primarily a research instrument. Many clinicians have never used it in practice.

The CBCL: Child Behavior Checklist. Built by Achenbach as a general psychopathology screener. Achenbach's framework defines child mental health as conformity to normative developmental standards. He decided what normal child development looks like. Then he built a tool to measure deviation from it. Not designed for autism. Heavily influenced by caregiver stress: a frightened or unsupported parent fills it in differently than a supported one. Cannot separate autism presentation from trauma response, from co-occurring conditions, from the effect of an unsupportive environment.

None of these instruments were built with autistic input. The people who built them decided what autism was before they built anything. The tools measure what their creators believed. Other instruments exist that measure what autistic people can do, what supports help them thrive, what they experience. Those instruments were available. They were not chosen.

The researchers then grouped all 239 items into seven categories — imported directly from the same literature these instruments came from. The categories existed before the model ran. The model found what the questions were built to find.

That is where this study starts. Not with 5,000 children. With the decisions of the people who built the questions those children's parents were asked to answer.

They took a large group of autistic children — over 5,000 — from a US research database called SPARK. Every child already had an autism diagnosis before any of these questionnaires were administered. The questionnaires did not diagnose anyone. They sorted people who were already diagnosed into new boxes.

SPARK is funded by the Simons Foundation — the same organisation that funded this paper. The funder of the research and the funder of the database that provided the data are the same organisation. That is worth knowing.

They asked the children's parents to fill in the three questionnaires described above. Parents ticked boxes. The boxes had been built to find deficit, deviance, and distance from neurotypical norms.

They fed all those ticked boxes into a computer model. The model's job — the only job it has — is to find groups in data. The model is built to look for groups. Finding groups is not the same as proving those groups are real biological types.

The model found four groups. Unsurprisingly, the groups looked like this:

- A group where parents ticked a lot of boxes across everything

- A group where parents ticked boxes mainly for social and behavioural things

- A group where parents ticked boxes for developmental delay and language

- A group where parents ticked fewer boxes overall

The researchers say the model was unsupervised — meaning it wasn't told in advance what the groups should look like. That is technically true. What happened next was not unsupervised.

After the model produced multiple possible groupings — the paper tested up to twelve class solutions — the researchers consulted clinical collaborators to decide which one was most interpretable. The paper states this directly:

This is actually how psychiatric diagnosis has always worked. The DSM, the manual that defines every psychiatric condition, was built the same way. Committees of clinicians looked at clusters of symptoms and voted on which groupings made clinical sense to them. The DSM's own architects acknowledged that its categories were products of expert consensus, not scientific understanding. In 2013 the then-director of the National Institute of Mental Health said publicly that DSM categories lack validity because they are based on symptom consensus rather than biological data.

The Litman paper did what the DSM always did. The algorithm was unsupervised. The selection of which algorithmic output to use was supervised by clinicians trained in the same tradition that built the instruments. Those clinicians chose the grouping that most resembled what they already understood autism to look like. That is not a criticism of the individual clinicians. It is a documented feature of how clinical judgment works when selecting between competing classification solutions.

The four classes were not discovered. They were selected. By people who already knew what they were looking for. The paper calls this biological discovery. The history of psychiatric diagnosis calls it how categories have always been built.

Subgroups can be useful for research. The problem is calling questionnaire-based groups biological subtypes before that biology has actually been shown.

They looked at the DNA of people in each group. But not all of them.

The genetic findings this paper calls biological programs were run on 2,294 children out of 5,392. Fewer than half. And only children who self-reported their race as white. The polygenic scores used population data from European ancestry studies — they do not work the same way in other populations, which is why the researchers restricted to white children. Every child in the study received a class label. The biology was only checked in some of them. The paper states this in its own methods section. It does not state it in the press release.
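The "fewer than half" figure can be checked with simple arithmetic. This is only a worked calculation using the two numbers quoted above; the variable names are illustrative.

```python
# Figures quoted in the text above.
children_with_class_label = 5392
children_in_genetic_analysis = 2294

# Share of labelled children whose genetics were actually examined.
share = children_in_genetic_analysis / children_with_class_label
# Children who received a class label without any genetic check.
not_checked = children_with_class_label - children_in_genetic_analysis

print(f"Share with genetics checked: {share:.1%}")
print(f"Children labelled but not genetically checked: {not_checked}")
```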

They used genetic scores calculated from massive studies of other people's genomes — hundreds of thousands of people — not from anything new they found in these children specifically. They found that the groups where parents ticked more boxes had higher scores on those genetic measures. The groups where parents ticked fewer boxes had lower scores.

There is one more thing worth knowing about those genetic scores. The paper itself states:

Not one of the four classes. The genetic signals that differentiated the groups were for ADHD, educational attainment, IQ, and depression — not for autism itself.

They called those four groups biological subtypes. They called the genetic differences genetic programs. They called it precision medicine. Princeton issued a press release. Autism Speaks called it groundbreaking.

What the Computer Actually Is — and What It Actually Did

The computer model the researchers used has a name: StepMix. It is a free, open-source software package anyone can download at no cost. It was developed by researchers at a Canadian university — Université Laval — and is available publicly on GitHub. It is not a special proprietary biological discovery tool developed by Princeton. It is a statistics package that any researcher with a laptop and a dataset can download and run today.

StepMix is a type of model called a mixture model. You give it a set of measurements — in this case, the answers from three parent questionnaires — and it tries different ways of dividing the people into groups until it finds the arrangement that best fits the data by a mathematical score. The critical thing to understand is this: the model is built to look for groups. The question is never whether groups exist. The question is whether the groups reflect something real in the world — or whether they reflect the structure of the measurement, the assumptions of the model, and the noise in the data. That question is not answered by running the model. It requires additional evidence the paper does not provide.

The model sorted 5,392 children's parent questionnaire responses into four groups based on the patterns in those responses. The researchers named them Broadly Affected, Social/Behavioral, Mixed ASD with Developmental Delay, and Moderate Challenges. They then looked at the genetic data and found that groups with higher questionnaire scores had higher average genetic liability scores — which is expected because both are measuring the same underlying gradient. They called those differences genetic programs.

The sophistication of the computation does not change what the data was. A mixture model finds patterns in what it is given. It was given questionnaires. It found patterns in questionnaires. The genetic analysis described the averages of the groups those patterns produced.
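The point that a grouping model returns groups whenever it is asked for them can be shown with a toy example. This is a minimal sketch, not the paper's code. It swaps in k-means, a much simpler grouping method than the StepMix mixture model, and it uses made-up scores drawn from one smooth range with no groups built in. All names and numbers are assumptions for illustration.

```python
import random

def kmeans_1d(values, k, iterations=50):
    """Plain 1-D k-means: returns k groups, whatever the data looks like."""
    ordered = sorted(values)
    # Start the centres at evenly spaced points through the sorted data.
    centres = [ordered[(2 * i + 1) * len(ordered) // (2 * k)] for i in range(k)]
    groups = [[] for _ in range(k)]
    for _ in range(iterations):
        groups = [[] for _ in range(k)]
        for v in values:
            # Assign each value to its nearest centre.
            nearest = min(range(k), key=lambda i: abs(v - centres[i]))
            groups[nearest].append(v)
        # Move each centre to the mean of its group (keep it if the group is empty).
        centres = [sum(g) / len(g) if g else c for g, c in zip(groups, centres)]
    return groups

random.seed(0)
# Hypothetical "total questionnaire score": one smooth gradient, no built-in groups.
scores = [random.uniform(0, 100) for _ in range(2000)]

groups = kmeans_1d(scores, k=4)
for i, g in enumerate(groups, start=1):
    print(f"group {i}: {len(g)} children, scores {min(g):.0f} to {max(g):.0f}")
```

Asked for four groups, the method hands back four groups, even though the data was generated with none. Whether groups like these mean anything has to be settled by other evidence.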

You can tell anyone who cites this paper's authority in a clinical meeting, a school meeting, or a funding decision: the groups were found by a free software package applied to parent behaviour checklists. The groups were named by the researchers after the software ran. The genetics described the averages of the named groups. There is no biological test that places any child in any group.

What about the replication?

The paper replicated at 0.927 correlation in a second cohort. That number gets cited as proof the findings are real. Here is what it actually proves.

The replication used the same instruments — the SCQ, the RBS-R, the CBCL — applied to similar families in a similar cohort. The same questions built on the same assumptions given to similar people produced similar patterns. Of course they did. That is what consistent instruments do. 0.927 tells you the questionnaires are reliable. It does not tell you the groups are biologically real. A ruler used twice in the same room gives you the same measurement both times. That does not mean the room is the right size.

To replicate a biological finding you need to find the same thing using different methods. The paper did not do that. It found the same questionnaire patterns using the same questionnaires. That is replication of a measurement. Not replication of a biology.
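A toy simulation can illustrate why the same questionnaire given to two similar cohorts produces matching patterns. This is a sketch under stated assumptions, not a reconstruction of the paper's replication analysis. The eight items, their loadings, and the rule of cutting each cohort into four equal pieces by total score are all made up, and the simulated children have one continuous gradient with no subtypes at all.

```python
import random

def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical loadings: how strongly each of 8 made-up items tracks the gradient.
LOADINGS = [0.2, 0.4, 0.6, 0.8, 1.0, 1.2, 1.4, 1.6]

def group_profiles(n_children, rng):
    """Simulate one cohort, split it into 4 groups by total score,
    and return the per-group mean of every item as one flat list."""
    children = []
    for _ in range(n_children):
        severity = rng.gauss(0, 1)  # one continuous gradient, no subtypes
        children.append([w * severity + rng.gauss(0, 1) for w in LOADINGS])
    children.sort(key=sum)  # order children by total questionnaire score
    size = n_children // 4
    profile = []
    for g in range(4):
        members = children[g * size:(g + 1) * size]
        for j in range(len(LOADINGS)):
            profile.append(sum(m[j] for m in members) / len(members))
    return profile

rng = random.Random(1)
cohort_a = group_profiles(2000, rng)
cohort_b = group_profiles(2000, rng)
r = pearson(cohort_a, cohort_b)
print(f"correlation between the two cohorts' group profiles: {r:.3f}")
```

In this sketch the two cohorts agree closely even though no biological subtypes were put into the simulation. The agreement comes from using the same instrument and the same sorting rule twice.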

The Problem

Parents who ticked more boxes have children with higher average genetic scores.

That is the finding.

That is not a surprise. The genetic scores are designed to measure how much genetic liability someone has for neurodevelopmental conditions. Of course people who score high on neurodevelopmental behaviour questionnaires also score high on genetic liability measures for neurodevelopmental conditions. They are measuring the same thing from two different angles.

The boxes and the genetic scores are measuring the same gradient. The paper found that the gradient is real — which everyone already knew. Then it cut that gradient into four pieces and gave each piece a name. Then it called each name a biological subtype with its own genetic program.

Cutting a gradient into pieces does not create four distinct biologies. It creates four labels for four positions on one continuous line.
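The argument above can be sketched as a small simulation. It assumes, purely for illustration, that a questionnaire score and a genetic score both track one shared continuous gradient plus noise. The effect sizes and sample size are invented and are not estimates from the paper.

```python
import random

random.seed(2)
N = 8000

children = []
for _ in range(N):
    gradient = random.gauss(0, 1)                    # one shared continuous gradient
    questionnaire = gradient + random.gauss(0, 0.5)  # hypothetical "boxes ticked" score
    genetic = 0.5 * gradient + random.gauss(0, 1)    # hypothetical genetic score
    children.append((questionnaire, genetic))

# Cut the questionnaire gradient into four equal-sized pieces, lowest to highest.
children.sort(key=lambda c: c[0])
size = N // 4
group_means = []
for g in range(4):
    members = children[g * size:(g + 1) * size]
    group_means.append(sum(m[1] for m in members) / len(members))

for g, mean in enumerate(group_means, start=1):
    print(f"piece {g}: average genetic score {mean:+.2f}")
```

The four pieces come out with four different average genetic scores, in order. Nothing in the simulation contains four biologies. The ordering is what cutting one line into pieces produces.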

This Has Been Tried Before — And The Evidence Shows What Happened

The field of autism research moved away from discrete categories to the spectrum — autism spectrum disorder — in 2013. That shift is documented and the reasons for it are in the peer reviewed literature.

Before 2013, autism was divided into subcategories: Autistic Disorder, Asperger's Syndrome, PDD-NOS, and others. Each carried different clinical weight. Each attached to different service eligibility in different states and countries. Each implied something different about a person's capacity and need. That is a tiering system. It operated from the DSM-IV in 1994 until the DSM-5 in 2013.

The DSM-5 workgroup documented four specific failures of that system that drove the decision to collapse it. Multiple studies showed the subcategories were unreliable — the same person got different diagnoses from different clinicians. The subcategories had poor predictive power — they did not predict different outcomes. The symptom presentations overlapped substantially across the categories — there was no clean biological or clinical boundary between them. And critically — the subcategories created restrictions on treatment eligibility and service coverage that caused documented harm to people at both ends.

Children with Asperger's diagnoses were denied services because the label implied their deficits were subtle. Some states provided services for Autistic Disorder but not for Asperger's. Clinicians documented spending hours advocating for children with PDD-NOS because school systems had no clarity on what the diagnosis meant or what support it warranted. People with higher-support-need labels were harmed by how the category structure shaped public perception and funding priorities. The tier divided the community against itself and caused harm to people at both ends.

A DSM workgroup member stated publicly that there was no robust, replicated body of evidence to support the diagnostic distinction between the categories after seventeen years of their use. The evidence said the categories were not real. The support tiers built on them were causing harm. The DSM moved to spectrum.

This paper takes the spectrum and runs it through a sorting machine and produces four boxes and calls it progress. The boxes are not new. The names didn't come from the data. The community already won this argument once. This paper is trying to move it back. It just has a better computer this time.

What a Real Biological Finding Would Look Like

When medicine finds a real biological subtype it finds something specific. Something that exists in your biology individually. Something that can be measured in you and used to understand what is happening in your body.

HER2 positive breast cancer is a real subtype. Doctors can test your tumour. The test tells them specifically whether your cancer will respond to a particular treatment. The biology identifies you individually. It changes what happens to you specifically.

PKU — phenylketonuria — is a real biological finding. A specific enzyme doesn't work properly. Doctors can test for it. There is a direct dietary intervention that addresses the specific mechanism. The biology names what is wrong and points to what helps.

The Litman paper found none of this for any of its four groups.

There is no test you can run on a child that places them in one of these four groups from their biology. The group is assigned from questionnaire scores. The genetic findings describe averages across groups — not anything measurable in an individual child. No mechanism was identified. No pathway was found. No therapeutic target was named.

What the Names Actually Mean

The researchers had to decide what to call the four groups the computer model produced. The model doesn't produce names. The researchers chose them.

They chose: Broadly Affected. Social/Behavioral. Mixed ASD with Developmental Delay. Moderate Challenges.

Broadly Affected means: this child's parents ticked a lot of boxes across all three questionnaires. That is all it means. The name sounds like a description of the child's neurology. It is a description of the questionnaire scores.

Moderate Challenges means: this child's parents ticked fewer boxes than the other groups. That is all it means. The paper did not find a distinct biology for this group. It found a group that scored lower and also had lower average genetic burden — which is what you would expect if you cut any continuous gradient into pieces. The lower end has lower scores. That is not a biological subtype. That is arithmetic.

Mixed ASD with Developmental Delay encodes within its name the assumption that the developmental delay is a feature of the autism — rather than a separate condition that might have its own cause and its own treatment that nobody is looking for because the child has been assigned a genetic program instead.

Social/Behavioral locates the difficulty in the child's social behaviour and conduct — not in the environment, not in what is being demanded of them, not in the sensory experience nobody asked about, not in the communication differences the questionnaire couldn't reach.

The names were chosen by the researchers after the statistics were run. The statistics produced gradient positions. The researchers produced clinical identities. Those are different things. And the clinical identities travel in files. They precede the child into every room they enter.

The Genetic Scores Came From Other People

The genetic scores the paper used — called polygenic scores — are not calculated fresh from the children in this study. They are derived from enormous studies of hundreds of thousands of other people, looking at which common genetic variants appear more often in people who have certain conditions.

The score for any individual child is a statistical estimate built from other people's data. It tells you roughly where that child sits relative to the population average for genetic liability. It is not a measurement taken from that child and used to identify them individually. It cannot place any child in any group from their biology. It is a population-level tool, not an individual diagnostic.

When the paper reports that Broadly Affected has a higher average polygenic score than Moderate Challenges, it is reporting the average of a population-derived statistic across groups defined by questionnaire scores. The children with more boxes ticked have higher scores on a measure built to correlate with the kinds of things that produce more ticked boxes. That is expected. It is not a fingerprint.
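In general terms, a polygenic score is a weighted sum: count how many copies of each variant a person carries, multiply by a weight estimated in a large outside study, and add it all up. The sketch below shows that general recipe only. The variant names, weights, and counts are hypothetical, and it does not reproduce the specific scoring pipeline the paper used.

```python
# Hypothetical effect weights, standing in for numbers published by a large
# outside study of other people. The variant names are made up.
weights_from_other_people = {"variant_A": 0.02, "variant_B": -0.01, "variant_C": 0.05}

def polygenic_score(genotype, weights):
    """Weighted sum: copies of each variant (0, 1 or 2) times its outside weight."""
    return sum(weights[v] * genotype.get(v, 0) for v in weights)

# One hypothetical child's variant counts.
child = {"variant_A": 2, "variant_B": 1, "variant_C": 0}
score = polygenic_score(child, weights_from_other_people)
print(f"polygenic score: {score:.2f}")
```

The only thing taken from the child is the variant counts. Every weight comes from other people's data, which is why the score is an estimate of relative position in a population and not an individual diagnostic.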

What About the Brain Findings?

The paper compared gene lists associated with each class against a published database of which genes are active in which brain cell types at which stages of fetal development. The database was built from postmortem human prefrontal cortex tissue. The comparison tells you whether the gene list overlaps — more than expected by chance — with genes known to be active in the developing brain at particular times.

That is a real finding. The problem is what they called the result.

No brain was scanned. No living brain was measured. No tissue from any child in this study was examined. What was done was a comparison between a statistical gene list and a database built from donated postmortem tissue. That comparison was called a genetic program for a living child.

The finding is interesting as a hypothesis about developmental timing in a population. It is not a fingerprint. It is not a clinical finding about the person in the room. Your child is not a population.
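"Overlaps more than expected by chance" is a counting question. One standard way to answer it is a hypergeometric test, sketched below with made-up numbers. This is an assumption about the general type of calculation, and the paper's own enrichment method may differ. Note what goes in: two lists of gene names and some counts. No brain and no child.

```python
from math import comb

def overlap_p_value(total_genes, active_genes, list_size, overlap):
    """Chance of seeing at least `overlap` shared genes if the list were
    drawn at random (one-sided hypergeometric test)."""
    upper = min(active_genes, list_size)
    favourable = sum(
        comb(active_genes, k) * comb(total_genes - active_genes, list_size - k)
        for k in range(overlap, upper + 1)
    )
    return favourable / comb(total_genes, list_size)

# Made-up numbers: 20,000 genes in total, 500 active in some cell type,
# a class gene list of 100, of which 10 are in the active set.
p = overlap_p_value(20000, 500, 100, 10)
print(f"p-value for the overlap: {p:.2e}")
```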

Who Is Actually in These Groups

The groups were built from questionnaire scores. Questionnaire scores can be high or low for many different reasons that have nothing to do with a child's autism subtype.

A child might score high across all three questionnaires — landing in Broadly Affected — because they have catatonia, which is a real medical condition that occurs in autism, is treatable, and produces exactly the profile that scores high on all three instruments, and which the paper has no mechanism to identify. Or because they are Deaf and the SCQ was built for hearing children. Or because they have Cerebral Palsy and their motor differences register across multiple questionnaire domains. Or because their parent was frightened and exhausted on the day they filled in the form. The paper cannot distinguish between them. Every one of those children gets the same label. The same genetic program. The same clinical tier.

A child might score lower — landing in Moderate Challenges — because their support needs are real but not visible to a caregiver-report questionnaire measuring surface behaviour. Because they mask. Because they use AAC and the instruments were not built for them. Because their cultural background means the questionnaire's assumptions about social interaction don't match how their family communicates.

That child gets a label that says their challenges are moderate. The label travels. The systems use it to decide what support they receive.

The Neurodiversity Movement Already Knew

The paper treats everyone in its cohort as a single-condition population — autistic, full stop — whose heterogeneity can be partitioned into four autism types. That misspecifies who is actually in the cohort. The groups the model found may not be autism subtypes at all. They may be clusters of different combinations of co-occurring neurodivergences — each with their own distinct biology, their own interactions, their own support needs — that the instruments are reading as one undifferentiated autism profile.

The neurodiversity movement knew this and said it clearly for decades before this paper was designed. Every time autism heterogeneity gets formalised into tiers the same thing happens. The people with the highest support needs get sorted into a category used to place limits on them. The people whose presentations are most legible to neurotypical observers get sorted into a category used to argue they don't need support. Both ends cause harm. The ND movement predicted exactly this. It was not listened to. It was not included in designing the research.

What Happens When This Gets Used Clinically

There are no class-specific treatments. There are no interventions that exist for Broadly Affected that don't exist for Moderate Challenges. The things that help autistic people — speech therapy, treatment for catatonia, AAC, sensory accommodations, reduced demand environments, community support — none of those are prescribed differently based on which of these four groups you are in. The paper acknowledges this. It says future research could examine how interventions may differ among the classes.

The labels are not yet embedded in clinical systems. The paper is less than a year old. But this is how classification systems enter clinical use: not all at once, but through citation and repetition until the language is everywhere and nobody remembers where it came from. The National Autistic Society (NAS) in the UK said this classification could be used to cut support to people who need it. They said that because they have seen how classification systems travel.

The Label Goes to School Too

The label does not have to be formally embedded in clinical systems to travel. Classification language moves through conversation, through reports, through the citations researchers and clinicians use to anchor their thinking.

An IEP is built on assessments of where a child is now and projections of where they might go. A label that says Broadly Affected — attached to what sounds like a biological finding from a prestigious genetics paper — can enter that room before the child does. A parent who read the Scientific American headline brings it in. A clinician who saw the coverage mentions it in an assessment report. Nobody has to act in bad faith. The label carries institutional authority. It sounds settled. It sounds like something discovered in biology rather than something produced by a free software package applied to parent-ticked questionnaires.

Research has shown that a third of autistic children labelled as low functioning score in the average range when tested with different methods. The label shapes the test. The test confirms the label. Educational decisions made from a biologically-framed classification can close doors that the child's actual capacity would open — if anyone looked for it.

The label is in a file. The file will travel. You have a right to know what it is based on — and what it is not based on.

What This Means For You

If you or your child has received one of these labels — Broadly Affected, Moderate Challenges, Mixed ASD with Developmental Delay, Social/Behavioral — here is what you should know.

That label did not come from your biology. There is no test that was run on your DNA or your brain that placed you in that group. The group was assigned based on how a caregiver answered three behaviour questionnaires on a particular day.

The genetic program attached to that label is not a finding about your specific neurology. It is a description of the average genetic characteristics of people who answered similar questionnaires similarly. It says nothing specific about you.

And if you or your child is not white, the genetic analysis was not run on people like you at all. The genetic programs were derived from white children only. The label was applied to everyone.

The label does not tell you what you need. It tells you where you sat on a gradient defined by questionnaire scores. Those are different things.

You are more than what three questionnaires could see on one day. Your neurology is not a genetic program derived from your parent's answers to behaviour checklists. Your support needs are not determined by which slice of a questionnaire score distribution you landed in.

Why This Document Exists

The researchers who published this paper have institutional backing. Princeton University. The Simons Foundation. Nature Genetics. Peer review. Press offices. Autism Speaks calling it groundbreaking.

The people being classified by this paper have files that say Broadly Affected.

The accountability processes that govern research — peer review, ethics approval, citation metrics — are accessible to researchers and structurally inaccessible to the people the research classifies. The people most affected by this classification have the least power to contest it through the channels that determine whether it stands.

Nothing about us without us is not a slogan. It is a legal principle embedded in the United Nations Convention on the Rights of Persons with Disabilities, which requires that research classifying disabled people include those people in its design. The paper did not. The instruments were chosen without autistic input. The classes were built without autistic input. The names were chosen without autistic input.

The National Autistic Society — the organisation that actually represents autistic people in the UK — said this classification has no current clinical value and could be used to cut support to people who need it.

Autism Speaks said it was groundbreaking.

Those two responses tell you everything you need to know about whose interests this paper serves.

The Short Version

A prestigious paper asked parents to tick boxes about their autistic children's behaviour using questionnaires built by researchers who had already decided what autism was. A free software package sorted the children into four groups based on those boxes. Clinical collaborators from the same research tradition then decided which grouping made the most sense to them.

The genetic analysis was run on fewer than half the children — white children only. Everyone got the label. The biology was only checked in some of them.

The children with more boxes ticked had higher scores on genetic measures built from other people's population data — which is expected, because both are measuring the same thing. The paper called the four groups biological subtypes and called the genetic differences genetic programs.

It found no biological test that identifies any child's group from their DNA. It found no brain markers measurable in living individuals. It found no mechanisms. It found no therapeutic targets. It found no basis for class-specific interventions.

It found that parents who ticked more boxes have children with higher average scores on population-derived genetic measures.

That is not a genetic fingerprint. That is a gradient. That gradient was cut into four pieces. The pieces were given clinical names. The names are in circulation. The authority is attached. The trajectory is clear.

The field moved to spectrum in 2013 because the discrete categories had proved unreliable and were causing harm. This paper is trying to move it back. It has a better computer this time. The categories are the same.

That is what they actually did.

Sister Grim — Very Autistic and Other Tales / Human Support Network — April 2026