Companion to: The Fairy Tale Version and The Theory Produced the Results (Human Support Network, March 2026). Those documents establish the historical record and the funding and legal architecture. This document goes somewhere they deliberately left space for: into the specific science of one high-impact paper, what it cannot see, and who pays the price for what it cannot see.

The plain-language version of this argument is available in What They Actually Did.

Executive Summary

In July 2025, a paper published in Nature Genetics claimed to decompose autism's heterogeneity into four biologically distinct subtypes — Broadly Affected, Social/Behavioral, Mixed ASD with Developmental Delay, and Moderate Challenges — each with its own genetic program, and presented those programs as the foundation for precision clinical diagnosis and care.

What the paper actually did was sort autistic children into four groups based on how their parents answered three behaviour questionnaires. It then found that groups defined by higher questionnaire scores had higher average genetic liability scores — scores calculated from other people's population-level genomic studies, not from individual biological measurement. It found that higher-scoring groups had higher rates of rare genetic mutations, without identifying what those mutations share mechanistically. It found that genes statistically associated with group membership overlap with brain development reference databases in ways that differ across groups — without scanning or measuring anyone's brain.

These are real findings. The paper's language does not describe them accurately.

What the paper's language implies — programs, fingerprints, biologically distinct, precision medicine — is that something was found in the biology of individual children that identifies their subtype, explains their presentation, and will guide their treatment. Nothing of that kind was found. There is no biological test that places any child in any class. The classification comes entirely from questionnaire scores. The genetic signals describe group-level averages on a continuous liability gradient. They do not identify mechanisms. They do not point to therapeutic targets.

The Moderate Challenges class, the largest at 1,860 people, illustrates the problem most precisely. The paper did not find a distinct biology for this group. It found a group that scored lower on three questionnaires and also had lower average genetic burden. Lower quantities of the same signals are not a distinct fingerprint. They are a position at the lower end of the same gradient.

The fundamental problem is that the paper's findings show graded differences in genetic burden across four groups defined by questionnaire scores. Graded differences on a continuous distribution are not discrete biological subtypes with independent mechanisms. They are positions on a gradient. The paper's instruments cannot separate intersecting neurodivergences. Its cohort systematically underrepresents the people most affected by its classifications. Its validation is circular. Its replication used the same instruments in demographically similar cohorts. The autism-specificity of the four classes was never tested against a comparison neurodevelopmental population.

The clinical consequence is a tiering mechanism operating in systems where tiering means rationing, with no class-specific interventions to deliver, because the findings do not identify mechanisms from which interventions could be derived. The UK's National Autistic Society (NAS) named this risk directly. It is not a remote possibility. It is the predictable trajectory of a prestigious classification with nothing in place to stop it.

The argument is not anti-science. It holds the paper to the standard of its own claims.

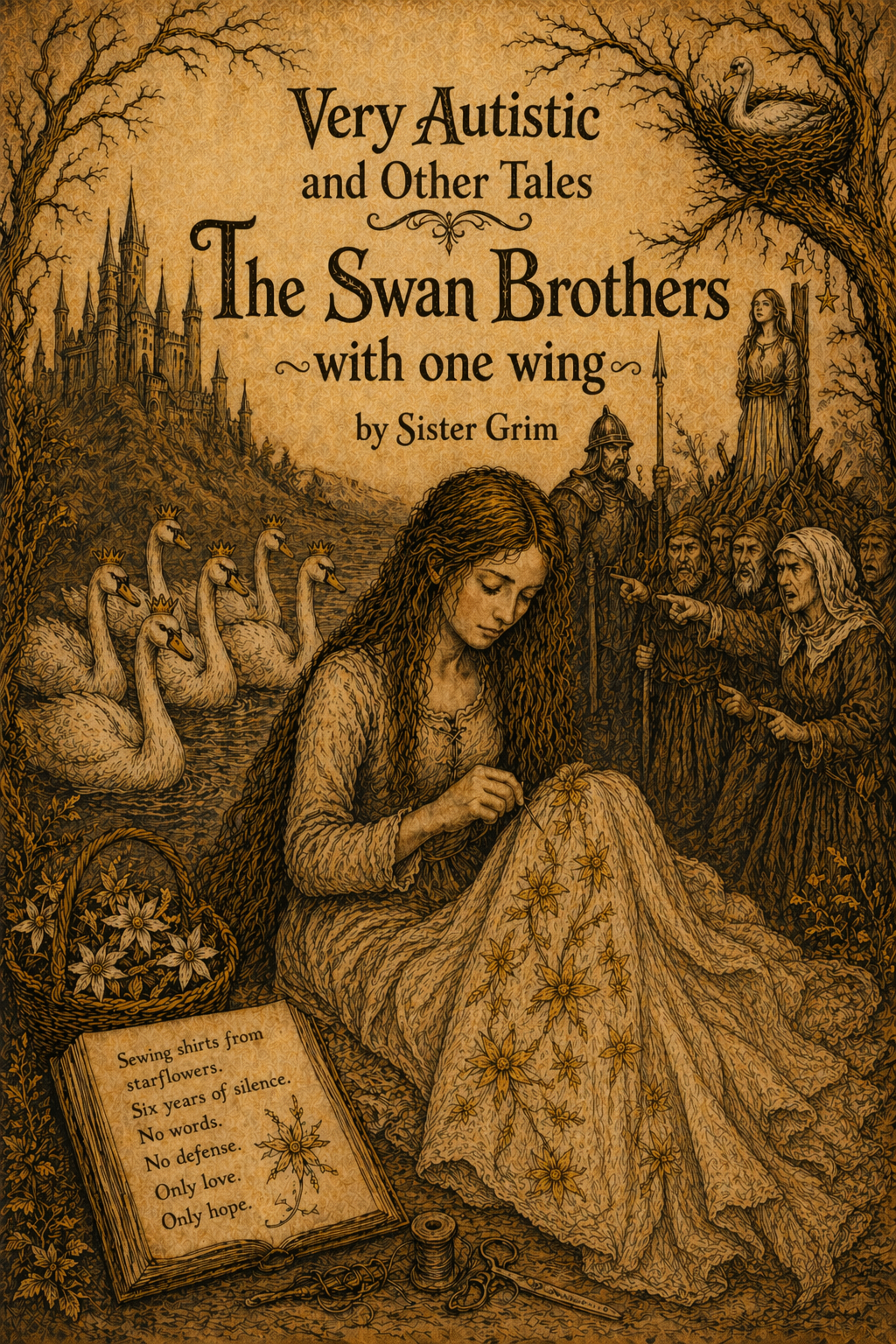

I. The Curse

In the Brothers Grimm story of the Six Swans, a king's children are transformed by a curse into birds. They can only become themselves again if their sister completes an impossible task in total silence for six years — sewing shirts from starflowers, never speaking, never defending herself, even as she is accused of murder and led to the stake. She knows the truth. She cannot say it. The world around her makes decisions about her brothers based entirely on what they appear to be.

In July 2025, a paper was published in Nature Genetics. It was called "Decomposition of phenotypic heterogeneity in autism reveals underlying genetic programs." It accumulated hundreds of thousands of accesses and dozens of citations within months of publication. It claimed to resolve what autism research had been struggling with for decades: how to meaningfully classify the enormous diversity of autistic people into coherent groups with distinct biological underpinnings.

It produced four classes. It called them clinically meaningful. It matched them to genetic programs. It replicated them in an independent cohort with a correlation of 0.927 and declared victory.

Dr Judith Brown of the UK's National Autistic Society — the country's largest autism charity — stated that such subtyping ideas "remain entirely theoretical and currently have no clinical value, diagnostic relevance or practical application." In some cases, she warned, such approaches "could be used to cut support to people who need it." The paper was published anyway. The citations accumulated. The genetic programs were named.

The sister's brothers are still swans.

II. What the Paper Claims

The researchers at Princeton's Troyanskaya lab took data from SPARK — a nationwide US cohort of 5,392 autistic children and their families — and ran it through a generative finite mixture model (GFMM). Their inputs were 239 features drawn from three questionnaires: the Social Communication Questionnaire (SCQ), the Repetitive Behavior Scale-Revised (RBS-R), and the Child Behavior Checklist (CBCL), plus a developmental history form completed by parents.

The model produced four classes: Broadly Affected, Social/Behavioral, Mixed ASD with Developmental Delay, and Moderate Challenges. The authors then analysed genetic data — polygenic scores, de novo variants, rare inherited variants, developmental gene expression timing in prefrontal cortex cell types — and found that each class had a different genetic fingerprint. They concluded that these analyses suggest specific biological dysregulation patterns and mechanistic hypotheses underlying autism's heterogeneity — and presented those suggestions as the basis for clinical application.

The paper's language overstates what the data can support. What it found was graded differences in genetic burden across four groups defined by caregiver-reported questionnaire scores. Graded differences on a continuous distribution are not distinct biological subtypes with independent mechanisms. A fingerprint identifies. A fingerprint is specific. What was found here is that parents who ticked more boxes on three questionnaires have children with higher average population-derived liability scores. The boxes and the scores are measuring the same underlying gradient. Naming the upper end Broadly Affected and the lower end Moderate Challenges, and calling those names genetic programs, does not produce two biological subtypes. It produces two labels for two positions on one continuous distribution.
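The gradient argument can be made concrete with a small simulation. This is a minimal sketch under stated assumptions, not a reanalysis of the paper's data: the single latent `liability`, the noise levels, the 0.5 loading, and the sample size are all hypothetical values chosen for illustration. One continuous gradient is read out twice, once as a questionnaire score and once as a genetic score, and the sample is then cut into four groups by questionnaire score alone.

```python
import random
import statistics

rng = random.Random(1)
n = 8000

# Hypothetical single latent gradient shared by everyone in the sample.
liability = [rng.gauss(0.0, 1.0) for _ in range(n)]
# Two noisy readouts of that one gradient (loadings and noise are assumptions).
questionnaire = [x + rng.gauss(0.0, 0.5) for x in liability]
genetic_score = [0.5 * x + rng.gauss(0.0, 1.0) for x in liability]

# Cut the questionnaire scores into four equal-sized groups, low to high.
order = sorted(range(n), key=lambda i: questionnaire[i])
quarter = n // 4
group_means = []
for g in range(4):
    members = order[g * quarter:(g + 1) * quarter]
    group_means.append(statistics.fmean([genetic_score[i] for i in members]))

for label, mean in zip(["lowest", "low-mid", "high-mid", "highest"], group_means):
    print(f"{label:>8} questionnaire group: mean genetic score {mean:+.3f}")
```

In this toy setting the four groups show steadily rising average genetic scores even though no subtypes were built into the data. Graded group differences of this kind are what a single partitioned gradient produces on its own.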

What This Paper Does and Does Not Establish

The paper sorted autistic children into four groups based on how their parents answered behaviour questionnaires. It then found that groups defined by higher questionnaire scores also had higher average scores on a genetic liability measure derived from other people's population data. It found that groups with higher questionnaire scores had higher rates of rare genetic mutations, without identifying what those mutations share mechanistically. It found that genes statistically associated with group membership overlap with brain development reference databases in ways that differ across groups — without measuring anyone's brain directly.

These are real findings. They do not establish biological subtypes with distinct mechanisms. They do not establish individual-level biomarkers — there is no biological test that places any person in any class from their DNA. They do not establish class-specific interventions, because no mechanisms were identified. They do not establish that the groups are specific to autism rather than generic neurodevelopmental liability gradients. They do not establish temporal stability, because the data is cross-sectional.

The paper's language describes something the data did not find. The framework is insufficient from the ground up — not because the science is dishonest, but because the questions the paper needed to answer in order to support its conclusions are questions it did not ask.

III. The Model Cannot Find What Isn't There

Two distinct arguments run through this section. The first is ontological: the four classes are not demonstrated to be real biological subtypes. They are reproducible latent-class summaries of instrument performance in these cohorts. The second is clinical: even setting the ontological question entirely aside, the paper's clinical application language precedes the validation required before a classification tool built on these classes could be considered safe for clinical use.

A defender who concedes the ontological point does not thereby escape the clinical one.

Clustering Is Not Ontology

The GFMM is designed to partition heterogeneity in complex, high-dimensional data. Finite mixture models will recover cluster solutions from data that is partly continuous, partly overlapping, and partly artifactual — because partitioning is what they are built to do. The output is therefore only as ontologically meaningful as the evidence that the resulting classes remain valid across alternative measurement choices, longitudinal follow-up, and clinically independent data.
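A short sketch illustrates why partitioning output alone cannot settle the question. It uses one-dimensional k-means written with the Python standard library as a simple stand-in for a partitioning method. It is not the paper's GFMM, and the function name `kmeans_1d`, the seed, and the sample size are assumptions made for illustration. The data come from a single bell curve with no subgroups in it.

```python
import random
import statistics


def kmeans_1d(values, k, iterations=50):
    """Plain 1-D k-means; centres start at evenly spaced quantiles."""
    ordered = sorted(values)
    n = len(ordered)
    centres = [ordered[(2 * i + 1) * n // (2 * k)] for i in range(k)]
    for _ in range(iterations):
        groups = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda j: abs(v - centres[j]))
            groups[nearest].append(v)
        # Keep the old centre if a group ever empties out.
        centres = [statistics.fmean(g) if g else c for g, c in zip(groups, centres)]
    return centres, groups


rng = random.Random(0)
# One continuous population: a single Gaussian, no subtypes built in.
scores = [rng.gauss(0.0, 1.0) for _ in range(2000)]

centres, groups = kmeans_1d(scores, k=4)
for centre, group in zip(centres, groups):
    print(f"class centre {centre:+.2f}, members {len(group)}")
```

Asked for four classes, the procedure returns four populated classes with ordered centres. The existence of the classes therefore says nothing by itself about whether the population contains four kinds of people.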

Autism was built as a spectrum deliberately. The community fought for years to move from discrete categories — Kanner's autism, Asperger's, PDD-NOS — to a spectrum. Not because it was politically convenient. Because the evidence required it. The DSM-5 workgroup documented four specific research-based reasons for the shift in 2013. First, multiple studies showed substantial variability in symptoms within and between diagnostic subgroups with similar core presentations. Second, the subcategories showed poor predictive power on later outcomes. Third, there was poor diagnostic reliability in assigning subcategory diagnoses. Fourth, and most directly relevant: the subcategories created documented restrictions on treatment eligibility and service coverage that caused harm at both ends of the tier.

Children with Asperger's diagnoses were denied services because the label implied their deficits were manageable. Some US states provided services for Autistic Disorder but not for Asperger's. The community divided against itself. A DSM-5 workgroup member stated publicly that after seventeen years of use, there was no robust, replicated body of evidence to support the diagnostic distinction between the categories. The evidence said the categories were not real. The harm they caused was real. The DSM moved to spectrum.

This paper takes that spectrum, runs it through a sorting machine, produces four boxes, and calls the result a paradigm shift. The boxes are not new. The paper rebuilds the old administrative divisions: it uses data shaped by those same wrong categories, runs better statistics on them, and calls the result underlying biological programs.

Who Decided Four Was the Right Number

The paper describes the GFMM as an unsupervised approach. This is technically accurate and incomplete. The paper's own methods section states:

"We consulted clinical collaborators on the interpretability of multiple candidate models and found that the four-class solution offered the best phenotypic separation and most clinically relevant classes." (Litman et al., Nature Genetics, 2025, Methods section)

"There was not one clear indicator for this parameter choice." (Litman et al., Nature Genetics, 2025, Methods section)

The paper tested up to twelve class solutions before settling on four. This is how psychiatric diagnosis has always worked. The DSM was built the same way — committees of clinicians looked at clusters and voted on which groupings made clinical sense to them. Hyman (2010) documented that DSM-III diagnoses were "perforce, the products of expert consensus, not the result of deep scientific understanding." Thomas Insel stated publicly that DSM categories lack validity because they are based on symptom consensus rather than biological data.

The Litman paper did what the DSM has always done. The algorithm was unsupervised. The selection of which algorithmic output to use was supervised by clinicians trained in the same tradition that built the instruments. Those clinicians chose the grouping that most resembled what they already understood autism to look like. The four classes were not discovered from the data. They were selected from the data by people who already knew what autism was supposed to look like.

The Genetic Analysis Was Not Run on Most of the Children

The paper's central claim is that four biological programs underlie the four classes. That claim rests on the genetic analysis. The genetic analysis was not run on 5,392 children. It was run on 2,294 of them, about 43 percent. And it was run only on children who self-reported their race as white, because the polygenic scores rely on population-level GWAS data derived from European-ancestry samples, and such scores do not transfer reliably to other ancestries.

Every child in the study received a class label. The biological programs attached to those labels were derived from fewer than half the children. The paper states this in its methods section. It does not state it in its abstract or in any of the clinical application language. The press release does not mention it.

A classification presented as biologically grounded, whose biological grounding was tested in fewer than half the participants and exclusively in white participants, cannot make universal biological claims. The genetic programs are the genetics of white children who scored particular ways on three questionnaires. They have been attached as labels to everyone — including children whose biology was never examined — and they are travelling into clinical systems that will apply them to children from every background.

The Autism PGS Finding

There is a finding within the polygenic score analysis that the paper reports but does not foreground:

"Notably, none of the classes had a statistically significant signal for the autism PGS, owing to the high variance of this score across our cohort and their siblings." (Litman et al., Nature Genetics, 2025, Results section, p. 1615)

Not one class. The classes the paper calls autism biological subtypes are not differentiated by autism genetics. The genetic signals that differ across classes are for ADHD, educational attainment, IQ, and major depression — not autism itself. The classes are differentiated by the genetics of general cognitive ability and co-occurring conditions. That is not the genetics of autism's internal biological architecture. It is the genetics of the general neurodevelopmental liability gradient partitioned by questionnaire scores — which is precisely what dimensional models of neurodevelopmental liability predict.

Replication Does Not Settle Ontology

The replication correlation of 0.927 in the Simons Simplex Collection (SSC) is real. It is not what the paper implies it is. The SSC model was projected from a SPARK-trained GFMM onto overlapping features in a cohort with comparable demographic composition, comparable ascertainment logic, and comparable instrument structure. High correlation of class enrichment patterns in similar populations is evidence that the same solution can be recovered from similar data. It is not evidence that the four-class structure reflects the true underlying architecture of autism.

Independent replication requires a cohort that differs not just in its families but in its ascertainment method, its instruments, and its demographic composition. SPARK and SSC share registry-based recruitment, overlapping clinical referral pathways, and comparable demographic skew. A correlation of 0.927 between similarly constructed cohorts using overlapping instruments is strong evidence that the measurement apparatus is stable. It does not confirm that what the apparatus is measuring corresponds to the thing claimed.
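The distinction between a stable apparatus and a confirmed structure can be sketched in a few lines. This is a toy illustration under assumed values, not a reconstruction of the SPARK-to-SSC projection: the five `LOADINGS`, the cohort sizes, and the quartile cut points are hypothetical. Both simulated cohorts come from the same continuous process with no discrete subtypes, classes are defined on the first cohort and applied to the second, and the per-class feature profiles are then correlated.

```python
import random
import statistics

LOADINGS = [0.9, 0.7, 0.5, 0.3, 0.1]  # hypothetical feature loadings


def simulate_cohort(rng, n):
    """Each person: one latent score, five noisy questionnaire features."""
    cohort = []
    for _ in range(n):
        latent = rng.gauss(0.0, 1.0)
        cohort.append([w * latent + rng.gauss(0.0, 1.0) for w in LOADINGS])
    return cohort


def class_profiles(cohort, cuts):
    """Mean of every feature within each class defined by total-score cuts."""
    classes = [[] for _ in range(len(cuts) + 1)]
    for person in cohort:
        total = sum(person)
        classes[sum(total > c for c in cuts)].append(person)
    return [statistics.fmean(col) for members in classes for col in zip(*members)]


def pearson(xs, ys):
    """Pearson correlation computed directly from its definition."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)


rng = random.Random(2)
cohort_a = simulate_cohort(rng, 3000)
cohort_b = simulate_cohort(rng, 3000)

# "Train" on cohort A: quartile cut points of the total score.
totals_a = sorted(sum(p) for p in cohort_a)
cuts = [totals_a[len(totals_a) * q // 4] for q in (1, 2, 3)]

r = pearson(class_profiles(cohort_a, cuts), class_profiles(cohort_b, cuts))
print(f"correlation of class profiles across cohorts: {r:.3f}")
```

The class profiles correlate very highly across the two simulated cohorts although the underlying population is a single continuum. A high replication correlation is what similar instruments applied to similar samples are expected to yield, whatever the true structure is.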

A 2025 systematic review of latent class analysis across psychology — Sorgente et al., in Behavior Research Methods, reviewing 313 LCA studies — states explicitly that class enumeration is "a critical but subjective decision in LCA," and that no single correct number of classes exists that perfectly describes population diversity. Even solutions with high assignment consistency can have poor classification accuracy. All models are approximations of data at a specific moment.

IV. The Questionnaires Were Never Neutral

The entire model rests on three instruments. Before the cultural validity problems — which are documented and substantial — there is a more foundational issue: the people who built these instruments had already decided what autism was before they built anything. The tools encode those decisions. The paper used the tools to discover what autism is. That is the closed loop.

The SCQ (Social Communication Questionnaire). Built by Rutter, Bailey, and Lord in the 1990s. These are the same researchers who were central to defining what autism is in the diagnostic literature. They decided what autism was: fundamentally a deficit in social communication and interaction, measured against neurotypical standards. Then they built a tool to measure that deficit. The tool encodes their definition. It was validated against the ADI-R, another instrument built on the same theoretical assumptions by the same research tradition, which means it was validated within a closed circle, not against independent evidence. Using it to discover what autism is amounts to using a map to prove the map is correct.

The RBS-R (Repetitive Behavior Scale-Revised). Built by Bodfish and colleagues, but not designed specifically for autism. The RBS-R was developed to assess repetitive behaviours in people with intellectual disability, with and without ASD. Every single item has a negative valence. There is no neutral item and no positive one. The scale cannot ask why a behaviour is present. It records that it is there and how much.

The CBCL (Child Behavior Checklist). Built by Achenbach as a general psychopathology screener. Not designed for autism. Applied here to autistic children, who by definition sit outside the normative baseline the instrument was built around, and used to quantify how far outside. Its scores are heavily influenced by caregiver stress: a frightened or unsupported parent fills it in differently than a supported one. It cannot separate autism presentation from trauma response, from co-occurring conditions, or from the effect of an unsupportive environment.

None of these instruments were built with autistic input. The measurement encodes the decisions of those who built it. The Litman paper used those measurements to discover the biological architecture of autism. The architecture it found was already inside the instruments before a single child was measured.

The paper then grouped the 239 items into seven phenotype categories imported directly from the same literature the instruments came from. The categories existed before the model ran. The model found what the questions were built to find and organised it into the structure the questions were built to produce.

The SCQ was normed predominantly on white, Western children. Bölte et al. (2008) documented cross-cultural validity concerns. Moody et al. (2017) found differential performance across demographic groups. Rescorla et al. (2011) showed significant variation across 24 countries in how parents rate identical behaviours — systematic, running along cultural lines. Cultural report bias is baked into the raw data, fed into a machine learning model, and named genetic programs.

The four classes replicate in the Simons Simplex Collection because SSC has the same demographic composition. Replicating a classification built on instruments with documented cultural validity problems in a cohort with the same demographic skew is not validation. It is confirmation that the same tools, applied to similar people, produce similar results.

Sensory processing differences — considered by many researchers and by autistic people themselves to be among the most defining and impactful features of autism — are substantially absent from the instruments as deployed here. A classification system for autism that does not adequately measure one of its most consistently reported features has a structural gap that replication cannot resolve.

V. Who Is Actually in the Cohort

Between 1990 and 2010, as autism diagnoses rose across the United States and Canada, diagnoses for intellectual disability fell in nearly equal measure. King and Bearman (2009) estimated that diagnostic substitution — children previously classified as intellectually disabled being reclassified as autistic — accounts for approximately one-quarter of the increase in autism prevalence. The Mixed ASD with DD class cannot be assumed to represent a uniform autism subtype. The design provides no mechanism to distinguish autistic people with co-occurring intellectual disability from people whose primary presentation involves intellectual disability reclassified into autism during a period of significant diagnostic boundary movement.

The paper's own extended data report the sex distribution across all four classes. It is remarkably uniform: approximately 75 to 80 percent male across all four. If the four classes were capturing meaningfully distinct biological subtypes, you would expect the sex distributions to differ across them, because masking (the camouflaging of autistic traits, reported more often in girls and women) suppresses precisely the presentations that define the lower-end classes. The uniform sex distribution across all four classes is consistent with all four being positions on the same continuous gradient, and inconsistent with four biologically distinct subtypes each capturing a different autism presentation. The paper does not acknowledge this implication.

The Validation Is Circular

The paper validates its classes using medical history diagnoses — rates of ADHD, anxiety, OCD, depression, and intellectual disability. But those diagnoses were made by clinicians working within the same deficit-based, white-Western-normative framework as the questionnaires used to build the classes. Independent validation requires data genuinely orthogonal to the training data. Reflecting the same assumptions through a different instrument is not independence.

Research consistently shows that approximately 70 to 80 percent of autistic people have at least one psychiatric condition across their lifetime. The research also consistently documents that these conditions are underrecognised in autistic populations — particularly in people with intellectual disability and communication differences. Diagnostic overshadowing means clinicians attribute psychiatric symptoms to the autism label rather than evaluating them independently. Communication barriers mean standard psychiatric diagnostic tools cannot access experience when communication is different or limited.

The populations the paper places in its highest-support-need classes are precisely the populations where psychiatric conditions are hardest to detect. The paper finds higher rates of ADHD, anxiety, and depression in lower-support-need classes. It presents this as evidence of distinct clinical profiles. But this pattern is also exactly what differential detection would produce — not fewer psychiatric conditions in the higher-support-need classes, but worse detection of conditions that are present at similarly high rates. The system measuring the problem is part of the problem.
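The differential detection argument is simple arithmetic, sketched here with hypothetical numbers. The true prevalence of 0.70 echoes the lower bound of the lifetime range cited above. The two detection rates are assumptions chosen only to show the effect and are not estimates from any study.

```python
# Hypothetical numbers, chosen only to illustrate the arithmetic.
true_prevalence = 0.70          # same in both groups by construction
detection_rate = {
    "higher-support-need class": 0.40,  # assumed: conditions harder to detect
    "lower-support-need class": 0.80,   # assumed: conditions easier to detect
}

# Recorded rate = true prevalence x probability a present condition is detected.
observed = {
    group: round(true_prevalence * sensitivity, 2)
    for group, sensitivity in detection_rate.items()
}

for group, rate in observed.items():
    print(f"{group}: recorded diagnosis rate {rate:.0%}")
```

With identical underlying prevalence, the recorded rate in the lower-support-need class comes out at twice that of the higher-support-need class. A gap in recorded diagnoses cannot, without more, distinguish a difference in clinical profile from a difference in detection.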

VI. The Co-Occurring Disabilities the Model Cannot See

The paper's design treats the people in its cohort as a single-condition population. But neurodivergent people are not one thing. They are frequently many things simultaneously — autistic and ADHD, autistic and dyslexic, autistic and dyspraxic, and often several of these together, alongside acquired conditions, trauma histories, chronic illness, and physical disability. The 239 behavioural features the paper analyses are not measuring autism heterogeneity in such people. They are measuring the heterogeneous output of multiple intersecting neurodivergences that the instruments cannot separate.

Language Impairment. Language impairment is documented in roughly half of verbal autistic children. Developmental Language Disorder — a distinct neurodevelopmental condition with its own profile and intervention requirements — is common in autistic populations and produces low scores on every language-dependent questionnaire the model used. The instruments provide no mechanism to distinguish a child with DLD and moderate autism from a child with a more severe autism presentation and no DLD.

Childhood Apraxia of Speech. CAS combined with autism produces a child who is minimally verbal or nonverbal not because of cognitive or social impairment but because of motor planning failure for speech. The paper treats nonverbal status as a class feature. It is not. It is a heterogeneous presentation that can reflect completely different neurological mechanisms.

Catatonia. Vaquerizo-Serrano et al.'s 2021 meta-analysis found that 10.4 percent of individuals with ASD have catatonia. Ghaziuddin et al. (2021) found that among autistic adolescents experiencing late regression, catatonia was present in 85 percent of cases. A child in catatonic regression will produce a completely different questionnaire profile than their baseline. The model has no mechanism to detect catatonia. It reads the crisis presentation and assigns a class. That class receives a genetic program. The catatonia — a treatable medical condition with established interventions — is never named.

AAC Users and the Intellectual Disability Misclassification Problem. Many autistic people who use augmentative and alternative communication have full cognitive capacity. Their parent-report SCQ scores will reflect the instrument's design constraints rather than their actual profile. The classification therefore cannot distinguish an AAC user with full cognitive capacity from a child with global developmental impairment. A classification system that cannot distinguish between absent capacity and unreachable capacity has no business naming a genetic program for either.

There is now a documented and growing population of autistic people who were previously classified as having intellectual disability — placed in the lowest-functioning categories, regarded as having significant cognitive impairment — who are now known, through access to AAC and alternative assessment methods, not to have intellectual disability at all. Their cognitive capacity was present throughout. The instruments used to assess them could not reach it.

FASD. Fetal Alcohol Spectrum Disorder produces social communication difficulties, repetitive behaviours, attention problems, and developmental delays that overlap substantially with autism presentation on questionnaire measures. FASD is systematically underdiagnosed, particularly in children from lower-income families and Indigenous communities. The design cannot identify the extent to which FASD-related profiles may be contributing to class structure.

VII. The Moderate Challenges Class: Instrument Design, Not Biology

The paper did not identify a distinct biological program for the Moderate Challenges class. It identified a group that scored lower on three questionnaires and found that this group also had lower average genetic burden than the more heavily scored groups. Lower questionnaire scores correlating with lower average genetic burden is not a biological fingerprint. It is a quantitative position on a continuous gradient — the expected signature of a dimensional liability distribution that has been partitioned into groups.

A genuine biological subtype requires something beyond lower quantities of the same signals: a distinct pathway, a distinct mechanism, a distinct biomarker pattern that identifies this group as biologically separate rather than numerically lower. The paper does not find any of these for Moderate Challenges. What it finds is that the lower-scoring group scores lower genetically too. That is not a subtype. That is the lower end of the same instrument-defined gradient, given a name and a clinical label, and sent into use.

The Moderate Challenges class is the largest in the study at 1,860 people — 34 percent of the cohort. It is not autism-specific. It is the lower tail of whatever neurodevelopmentally diverse clinical population you put into this model, defined by relative absence of elevation on general instruments rather than by any positive autism-specific feature.

VIII. Borrowing the Language of Complexity

The paper uses the language of genomic medicine — programs, fingerprints, precision medicine, biologically distinct — to describe findings that genomic medicine's own standards do not support. A genetic program, in the molecular biological sense that generates interventions, names a pathway. BRCA1 names a DNA repair mechanism. HER2 names a receptor. mTOR names a signalling cascade. Each one makes the route from biology to treatment visible in the biology itself.

The Litman paper's genetic programs name developmental timing correlations — enrichments in gene sets expressed in prefrontal neurons during fetal development, distributed across four partitions of behavioural questionnaire scores. The absence of class-specific interventions is therefore not a timing problem awaiting further research. It is a signal about what was found. Gradient correlations between questionnaire score partitions and developmental gene expression timing enrichments do not generate class-specific interventions. The precision medicine framing has been placed around findings that do not yet have the biological resolution to support it. What precision means here is not what precision means in HER2 treatment. It is precision in the sense that the boxes have been drawn more carefully than before. The people sorted into them do not benefit from that precision in any way the paper has demonstrated.

IX. The Legal Architecture

The legal framework governing research on disabled people in the jurisdictions where this classification will be applied is specific. Article 4(1)(f) of the United Nations Convention on the Rights of Persons with Disabilities requires states to promote and undertake research on universal design in order to address the particular needs of persons with disabilities. Article 31 requires that states collect appropriate information, including statistical and research data, to enable them to formulate and implement policies to give effect to the Convention. General Comment No. 6 (2018) makes explicit that research involving disabled people must be conducted with the full and effective participation of persons with disabilities, through their representative organisations.

The paper did not include autistic people in its design. The instruments were chosen without autistic input. The classes were constructed without autistic input. The names were chosen without autistic input. Nothing about us without us is not a slogan. It is a legal principle embedded in the framework that governs this research in every jurisdiction that has ratified the CRPD.

The United Nations Declaration on the Rights of Indigenous Peoples is equally specific. Article 22 requires particular attention to the rights and special needs of Indigenous persons with disabilities. Articles 23 and 24 establish that Indigenous peoples have the right to be actively involved in determining the health programmes that affect them and the right to the highest attainable standard of health. Article 31 establishes the right to maintain, control, protect, and develop their cultural heritage, traditional knowledge, and traditional cultural expressions. The Truth and Reconciliation Commission of Canada's Calls to Action 18 through 24 address Indigenous health, including the right to determine their own health priorities. Indigenous autistic children and families appear in the SPARK cohort. Their data has been used to construct biological classification tiers with stated clinical applications. The paper does not address whether community-level partnership was part of the research design for Indigenous participants.

A clinician in a Canadian provincial health authority applying this classification to an Indigenous autistic child has no evidence in the source paper that the classification was designed in compliance with the standards under which they are required to operate. The silence is the problem, and it is a present problem, not a limitation to be corrected in follow-up work.

X. The People the Story Doesn't Follow Home

In the original Six Swans, the sister saves her brothers. The story does not acknowledge that anyone else is under a curse, that anyone else is trapped in the wrong form. The paper has exactly the same structure.

Deaf and Hard of Hearing People. Deaf children who communicate in sign, or who have auditory processing differences, score differently on every language-dependent measure — not because their autism is different, but because the instrument was built for hearing children. The SCQ has no validity data for Deaf populations.

Indigenous and First Nations Children. Indigenous autistic children may carry simultaneously higher rates of FASD from documented health inequities, higher rates of trauma from ongoing effects of colonial violence, different cultural relationships to eye contact and direct communication, and radically different access to diagnostic services. The instruments were not validated for these communities.

Blind and Low Vision Children. Many blind children develop repetitive behaviours, sometimes called blindisms, that score on the RBS-R in ways the instrument cannot distinguish from autistic repetitive behaviours. Their presentations enter the classification and shape the genetic programs assigned to the classes that contain them.

Adults. The paper is entirely paediatric. None of the genetic programs it identifies says anything about late-diagnosed adults whose difficulties were not visible in surface behaviour at assessment, or about autistic adults who acquired additional disabilities through life.

An autistic adolescent under sustained stress, entering catatonic regression, scores as Broadly Affected during the crisis. The classification captures the crisis presentation, not the person. The catatonia is never identified because the instruments cannot identify it. It is not treated because it was never named.

They are in the cohort. Their data shaped the genetic programs. Their lives will be shaped by the clinical applications that follow. They are not in the story.

XI. What Happens When This Gets Applied

Classification systems from papers like this travel. They get cited in clinical guidelines, encoded in diagnostic protocols, used to determine funding eligibility, and built into the research that shapes how services are designed. The National Autistic Society (NAS) has already identified the risk. The window between publication and clinical application is not hypothetical. It is open now.

Princeton University's official press release described the classes as "clinically and biologically distinct subtypes," framing them as advancing "precision diagnosis and care." Autism Speaks described the findings as "groundbreaking," presenting the four subtypes as confirmed biological entities. Scientific American's headline read "Four New Autism Subtypes Link Genes to Children's Traits." The authors themselves, speaking to Scientific American, acknowledged that "this classification is not a definitive, comprehensive grouping." That acknowledgement did not travel with the headline.

The separation the paper performs does not unlock new support. There are no class-specific interventions. The things that help autistic people — speech language therapy, treatment for catatonia, AAC, sensory accommodations, reduced demand environments, community support — none of those are delivered differently based on whether a child is Broadly Affected or Moderate Challenges. What the classification unlocks in the systems where it is already being cited is a tiering mechanism — a scientifically credentialed architecture for rationing what was already insufficient, in language that makes the rationing feel like precision rather than a choice.

In the Six Swans, the sister does not get to say she will finish the last shirt later while her brother stands at the stake with a wing already growing. The curse does not pause for future work. The shirt either covers the arm or it does not. The paper either sees the person or it does not. And the clinical applications follow the paper that exists, not the better one that is promised.

XII. "Science Is Iterative" Is Not a Defence of What Is Happening Now

The most common response to critiques of this kind is: science is iterative. The paper is a first step. Future work will refine the classes, improve the instruments, expand the cohorts. The response is reasonable as a description of how science works. It is not a defence of the specific problem the paper presents.

The problem is not that the paper is imperfect. The problem is that it presented itself as ready for clinical application before the evidence supported that claim. "Precision medicine" is not a hedge. "Biologically distinct subtypes" is not a preliminary finding. "Genetic programs" is not a tentative hypothesis. The clinical application language set expectations the evidence cannot meet. And the classification is already travelling — in press releases, in clinical summaries, in the citations researchers and clinicians use to anchor their thinking — in the form that was presented, not in the form the evidence supported.

The limitations documented throughout this critique were available to be known before this paper was designed. The SCQ's cross-cultural validity problems were published in 2008. The systematic underestimation of cognitive capacity in autistic children was documented by Dawson et al. in 2007. The masking literature is a decade old. The diagnostic substitution data is from 2009. None of this required access to unpublished research. It was in the literature. The paper does not engage with it.

Science being iterative means the next paper can do better. It does not mean the current paper's clinical consequences pause while the iteration happens. The brother with the wing does not get a follow-up study. The child whose crisis was classified as a genetic program does not get a correction issued to their school file when the validation studies eventually report. The classification is already in the room. The iterative correction is not.

XIII. The Brother With the Wing

In the Brothers Grimm version of the Six Swans, the sister almost makes it. Six years of silence. Accused of murdering her own children. Led to the stake. At the last moment the shirts are finished, the brothers transform, the curse breaks.

One brother still has a swan's wing instead of an arm. The last shirt wasn't quite done in time.

The story calls this the happy ending.

The disability research debate works exactly like this. The people doing the labour — autistic self-advocates, disabled community researchers, clinicians who have been saying for years that the instruments are wrong, that the cohorts are biased, that you are missing us and our children — watch a Nature Genetics paper land with a replication correlation of 0.927 and wide institutional backing. And everyone applauds.

The four classes are real in the same way the brothers are real. They are real things, genuinely seen, genuinely there. But they are seen in the wrong form. The model is looking at swans and writing papers about swan biology and developing genetic programs for different kinds of swans, and the sister has been sewing for six years knowing that under the feathers there are people — and some of them are not even her brothers, and some of them were never cursed by the same spell at all.

The Deaf child. The child with FASD in an Indigenous community whose specific health priorities were not part of the research design. The child with cerebral palsy whose motor patterns entered someone else's repetitive behaviour profile. The child in catatonic regression whose crisis was classified and named as a genetic program. The child with language impairment whose condition is invisible inside someone else's nonverbal presentation. The autistic person whose communication difference is real and present across the entire spectrum but whose communication modality (AAC, atypical verbal communication) is not legible to an instrument designed for surface verbal social behaviour, and who therefore scores in the lower tier and receives the lower resources.

They are in the cohort. Their data shaped the genetic programs. Their lives will be shaped by the clinical applications that follow.

They are not in the story.

The question is not whether this paper is good science by the narrow technical standards of its own field. In many respects it is. The question is what it means to do good science on a population you have not actually seen, with instruments that cannot hold the complexity of what you are measuring, inside a framework that treats the absence of someone's story as data rather than as a failure of the research design.

The accountability mechanisms that govern this paper — peer review, IRB approval, institutional oversight, citation metrics — are accessible to researchers and structurally inaccessible to the people the research classifies. That asymmetry is not incidental to the critique. It is part of what the critique is about.

Autism Speaks endorsed this paper. The National Autistic Society — the organisation that represents autistic people — contested it. Those two responses are not two sides of a scientific debate. They are a description of whose framework this paper inhabits and whose community it did not include in building it.

The brother with the wing does not get a follow-up study.

The sister knew. She always knew. The question is who gets to be the sister — and whether the people doing the research are willing to sit in silence long enough to listen to her.

References

- Litman A, Sauerwald N, Green Snyder L et al. (2025). Decomposition of phenotypic heterogeneity in autism reveals underlying genetic programs. Nature Genetics, 57(7), 1611–1619. DOI: 10.1038/s41588-025-02224-z

- Brown J. (2026, March 9). Challenging misinformation about autism: using evidence to correct false claims. National Autistic Society. autism.org.uk

- Volkmar FR & McPartland JC. (2014). From Kanner to DSM-5: autism as an evolving diagnostic concept. Annual Review of Clinical Psychology, 10, 193–212.

- Hyman SE. (2010). The diagnosis of mental disorders: the problem of reification. Annual Review of Clinical Psychology, 6, 155–179.

- Insel T. (2013). Transforming Diagnosis. National Institute of Mental Health Director's Blog, April 29, 2013.

- Sinha P, Calfee CS, Delucchi KL. (2021). Practitioner's Guide to Latent Class Analysis. Critical Care Medicine, 49(1), e63–e79.

- Sorgente A et al. (2025). A systematic review of latent class analysis in psychology. Behavior Research Methods, 57, 301.

- Dawson M, Soulières I, Gernsbacher MA, Mottron L. (2007). The level and nature of autistic intelligence. Psychological Science, 18(8), 657–662.

- King MD & Bearman PS. (2009). Diagnostic change and the increased prevalence of autism. International Journal of Epidemiology, 38(5), 1224–1234.

- Botha M, Chapman R, Giwa Onaiwu M et al. (2024). The neurodiversity concept was developed collectively. Autism, 28(6), 1591–1594.

- Bölte S, Holtmann M, Poustka F. (2008). The Social Communication Questionnaire as a screener for autism spectrum disorders: additional evidence and cross-cultural validity. JAACAP, 47(6), 719–720.

- Moody EJ et al. (2017). Screening for Autism with the SRS and SCQ: Variations across Demographic, Developmental and Behavioral Factors. J Autism Dev Disord, 47(11), 3550–3561.

- Rescorla LA et al. (2011). International comparisons of behavioral and emotional problems in preschool children. J Clinical Child & Adolescent Psychology, 40(3), 456–467.

- Mandell DS et al. (2007). Disparities in diagnoses received prior to a diagnosis of autism spectrum disorder. J Autism Dev Disord, 37(9), 1795–1802.

- Vaquerizo-Serrano J et al. (2021). Catatonia in autism spectrum disorders: A systematic review and meta-analysis. European Psychiatry, 65(1), e4.

- Ghaziuddin M, Ghaziuddin N, Greden J. (2021). Catatonia: A Common Cause of Late Regression in Autism. Frontiers in Psychiatry, 12, 674009.

- Wing L & Shah A. (2000). Catatonia in autistic spectrum disorders. British Journal of Psychiatry, 176, 357–362.

- Loucas T et al. (2008). Autistic symptomatology and language ability in ASD and specific language impairment. JCPP, 49(11), 1184–1192.

- Bishop DVM et al. (2017). Phase 2 of CATALISE. PLOS ONE, 12(7), e0181369.

- Lai MC et al. (2019). Prevalence of co-occurring mental health diagnoses in the autism population. Lancet Psychiatry, 6(10), 819–829.

- Milner V, McIntosh H, Colvert E, Happé F. (2019). A Qualitative Exploration of the Female Experience of ASD. J Autism Dev Disord, 49(6), 2389–2402.

- Pellicano E & den Houting J. (2022). Shifting from 'normal science' to neurodiversity in autism science. JCPP, 63(4), 381–396.

- Milton DEM. (2012). On the ontological status of autism: the 'double empathy problem.' Disability & Society, 27(6), 883–887.

- United Nations Convention on the Rights of Persons with Disabilities (CRPD). 2006. Articles 1, 3(d), 4, 31. General Comment No. 6 (2018).

- United Nations Declaration on the Rights of Indigenous Peoples (UNDRIP). 2007. Articles 22, 23, 24.

- Truth and Reconciliation Commission of Canada. Calls to Action. 2015. Calls 18–24.

- Sister Grim. (2026a). The Fairy Tale Version. Very Autistic and Other Tales. crapwematter.com

- Sister Grim. (2026b). The Theory Produced the Results. humansupportnetwork.org

- Lord C et al. (2012). A multisite study of the clinical diagnosis of different autism spectrum disorders. Archives of General Psychiatry, 69(3), 306–313.

- Wing L & Gould J. (1979). Severe impairments of social interaction and associated abnormalities in children. J Autism Dev Disord, 9(1), 11–29.

- Laxman DJ et al. (2019). Loss in services precedes high school exit for teens with autism spectrum disorder. Autism Research, 12(6), 911–921.

The plain-language companion to this document, What They Actually Did, covers the same ground without the technical scaffolding, for families, advocates, and anyone who received one of these labels.

Sister Grim — Very Autistic and Other Tales / Human Support Network — April 2026